Lei Xie is a Professor at Northwestern Polytechnical University, where he leads the Audio, Speech and Language Processing Lab (ASLP@NPU). His research focuses on speech processing, conversational AI, and neural models for speech and language technologies, with work spanning speech enhancement, automatic speech recognition, and speech synthesis.

He is also committed to building open-source tools and data resources for the research community, including the widely used WeNet toolkit and the WenetSpeech open-data series.

Professor Xie has published over 400 papers, received more than 17,000 Google Scholar citations, and has an H-index of 62. His work has received multiple best paper awards, won international challenge championships, and has been translated into industrial applications. He currently serves as Vice Chairperson of ISCA SIG-CSLP and Senior Area Editor for IEEE/ACM TASLP and IEEE SPL.

Full Biography

Lei Xie is a Professor at the School of Computer Science, Northwestern Polytechnical University (NPU), where he leads the Audio, Speech and Language Processing Lab (ASLP@NPU). His research focuses on speech processing, conversational AI, advanced neural models for speech and language technologies, and large audio/speech language models, with contributions spanning speech enhancement, automatic speech recognition, speech synthesis, and spoken dialogue systems.

He is also committed to advancing open-source research infrastructure for the community, leading projects such as the widely used WeNet speech recognition toolkit and the WenetSpeech open-data series.

Dr. Xie received his Ph.D. in Computer Engineering from NPU, where his doctoral research focused on speech recognition. Before joining NPU as a faculty member, he held research positions at Vrije Universiteit Brussel, City University of Hong Kong, and The Chinese University of Hong Kong.

He has received several honors and recognitions, including the New Century Excellent Talents Program of the Ministry of Education of China, the Shaanxi Young Science and Technology Star Award, recognition as one of the World’s Top 2% Scientists (Stanford University & Elsevier), and the title of Huawei Cloud AI Distinguished Teacher.

Professor Xie has published over 400 peer-reviewed papers in audio, speech, and language processing, with more than 17,000 citations on Google Scholar and an H-index of 62. His work has received multiple best paper awards at international conferences and won several international challenge championships. A number of his research outcomes have also been successfully translated into real-world industrial applications.

At ASLP@NPU, he mentors a diverse group of students and researchers working at the intersection of speech, audio, and language intelligence. He is also an active contributor to the research community, serving in leadership and editorial roles. He currently serves as Vice Chairperson of the ISCA Special Interest Group on Chinese Spoken Language Processing (SIG-CSLP) and as Senior Area Editor for both IEEE/ACM Transactions on Audio, Speech, and Language Processing and IEEE Signal Processing Letters.

News

| Date | News |
| --- | --- |
| Apr 10, 2026 | The 2026 master’s cohort graduated successfully and joined top companies such as Alibaba, Tencent, and JD.com. Congratulations! |
| Apr 07, 2026 | WenetSpeech-Wu - The largest Wu Chinese dataset to date, accepted by ACL2026. |
| Apr 07, 2026 | LLM-forced Aligner, the technology behind Qwen3-Qwen/Qwen3-ForcedAligner, accepted by ACL2026 |
| Mar 17, 2026 | 4 papers accepted by ICME2026 |
| Jan 18, 2026 | 8 papers accepted by ICASSP2026 |
| Jan 08, 2026 | VoiceSculptor, a voice design model, now open-sourced |

Lab

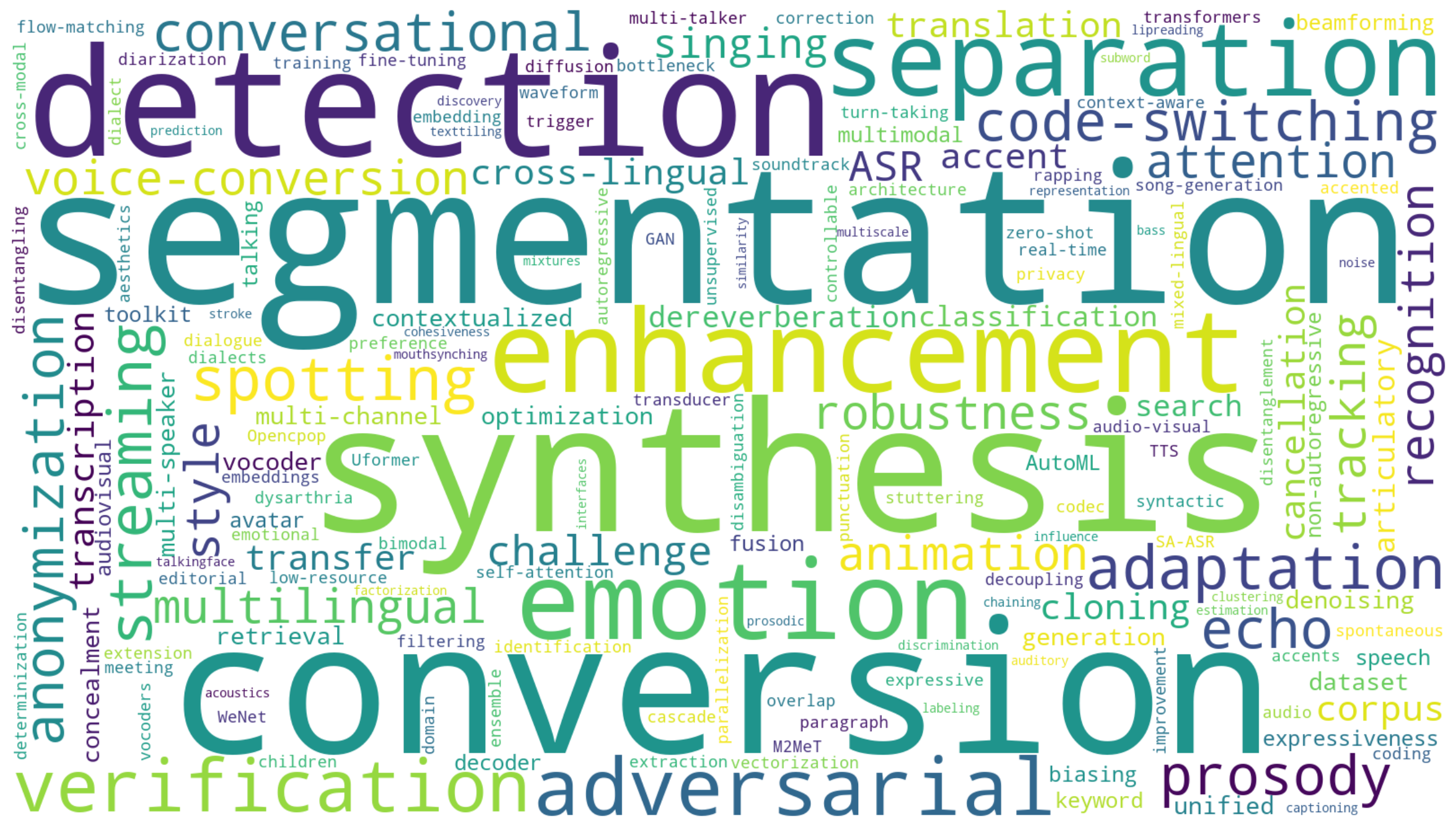

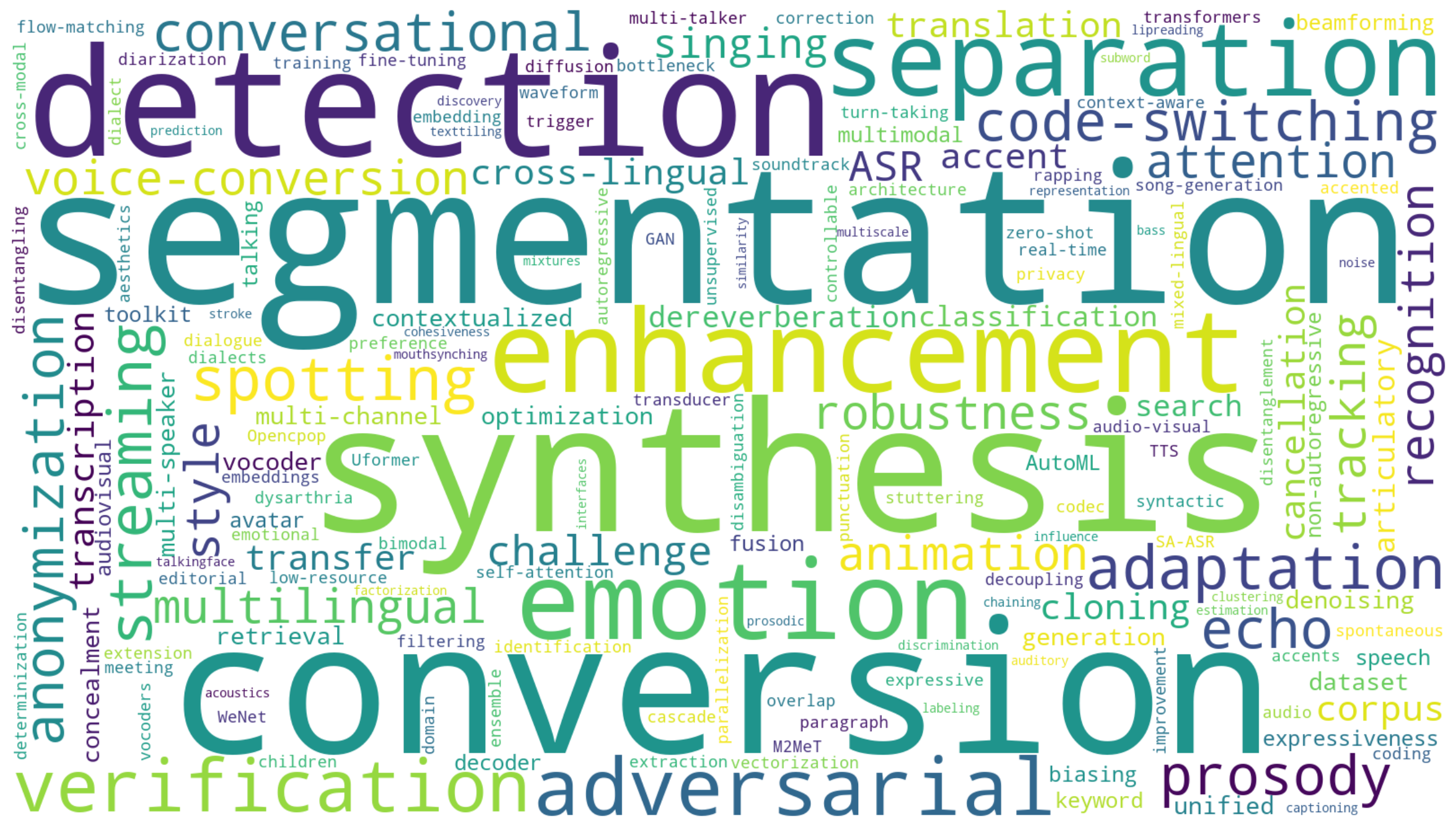

The Audio, Speech and Language Processing Lab (ASLP@NPU), led by Prof. Lei Xie at Northwestern Polytechnical University, is widely recognized as one of the leading research groups in speech, audio, and language technologies. The lab conducts cutting-edge research spanning speech recognition, speech synthesis, speech enhancement, spoken dialogue systems, and emerging audio language models, with a strong commitment to both scientific innovation and real-world impact.

ASLP@NPU places equal emphasis on research excellence and practical deployment, and has maintained close and long-term collaborations with industry. Many of its research outcomes have been successfully translated into real applications, while its open-source platforms and data resources — including WeNet and WenetSpeech — have been widely adopted by both academia and industry.

The lab has also played an important role in cultivating talent for the broader AI and speech community, with many alumni becoming technical leaders, senior researchers, and key engineering contributors in leading technology companies and research institutions.

By combining academic depth, engineering strength, and industrial relevance, ASLP@NPU continues to advance the frontier of speech intelligence and next-generation human–machine communication.

Recent Popular Open-source Projects

- SoulX-Podcast: Inference codebase for generating high-fidelity podcasts from text with multi-speaker multi-dialect support
- DiffRhythm: End-to-end full-length song generation via latent diffusion
- OSUM: Open speech understanding model for limited academic resources
- SongEval: Aesthetic evaluation toolkit for generated songs
- WenetSpeech-Yue: Large-scale Cantonese speech corpus with multi-dimensional annotation
- MeanVC: Lightweight and streaming zero-shot voice conversion via mean flows
- VoiceSculptor: Instruct text-to-speech solution based on LLaSA and CosyVoice2
- WenetSpeech-Chuan: Large-scale Sichuanese dialect speech corpus
- DiffRhythm2: Efficient high-fidelity song generation via block flow matching
- WenetSpeech-Wu-Repo: Large-scale Wu dialect speech corpus with multi-dimensional annotation

Recent Publications

- Summary on The Multilingual Conversational Speech Language Model Challenge: Datasets, Tasks, Baselines, and Methods

Bingshen Mu, Pengcheng Guo, Zhaokai Sun, Shuai Wang, Hexin Liu, Mingchen Shao, and 5 more authors

In ICASSP, 2026

This paper summarizes the Interspeech2025 Multilingual Conversational Speech Language Model (MLC-SLM) challenge, which aims to advance the exploration of building effective multilingual conversational speech LLMs (SLLMs). We provide a detailed description of the task settings for the MLC-SLM challenge, the released real-world multilingual conversational speech dataset totaling approximately 1,604 hours, and the baseline systems for participants. The MLC-SLM challenge attracts 78 teams from 13 countries to participate, with 489 valid leaderboard results and 14 technical reports for the two tasks. We distill valuable insights on building multilingual conversational SLLMs based on submissions from participants, aiming to contribute to the advancement of the community.

- WenetSpeech-Chuan: A Large-Scale Sichuanese Corpus with Rich Annotation for Dialectal Speech Processing

Yuhang Dai, Ziyu Zhang, Shuai Wang, Longhao Li, Zhao Guo, Tianlun Zuo, and 10 more authors

In ICASSP, 2026

The scarcity of large-scale, open-source data for dialects severely hinders progress in speech technology, a challenge particularly acute for the widely spoken Sichuanese dialects of Chinese. To address this critical gap, we introduce WenetSpeech-Chuan, a 10,000-hour, richly annotated corpus constructed using our novel Chuan-Pipeline, a complete data processing framework for dialectal speech. To facilitate rigorous evaluation and demonstrate the corpus’s effectiveness, we also release high-quality ASR and TTS benchmarks, WenetSpeech-Chuan-Eval, with manually verified transcriptions. Experiments show that models trained on WenetSpeech-Chuan achieve state-of-the-art performance among open-source systems and demonstrate results comparable to commercial services. As the largest open-source corpus for Sichuanese dialects, WenetSpeech-Chuan not only lowers the barrier to research in dialectal speech processing but also plays a crucial role in promoting AI equity and mitigating bias in speech technologies. The corpus, benchmarks, models, and recipes are publicly available on our project page.

- Towards Building Speech Large Language Models for Multitask Understanding in Low-Resource Languages

Mingchen Shao, Bingshen Mu, Chengyou Wang, Hai Li, Ying Yan, Zhonghua Fu, and 1 more author

In ICASSP, 2026

Speech large language models (SLLMs) built on speech encoders, adapters, and LLMs demonstrate remarkable multitask understanding performance in high-resource languages such as English and Chinese. However, their effectiveness substantially degrades in low-resource languages such as Thai. This limitation arises from three factors: (1) existing commonly used speech encoders, like the Whisper family, underperform in low-resource languages and lack support for broader spoken language understanding tasks; (2) the ASR-based alignment paradigm requires training the entire SLLM, leading to high computational cost; (3) paired speech-text data in low-resource languages is scarce. To overcome these challenges in the low-resource language Thai, we introduce XLSR-Thai, the first self-supervised learning (SSL) speech encoder for Thai. It is obtained by continuously training the standard SSL XLSR model on 36,000 hours of Thai speech data. Furthermore, we propose U-Align, a speech-text alignment method that is more resource-efficient and multitask-effective than typical ASR-based alignment. Finally, we present Thai-SUP, a pipeline for generating Thai spoken language understanding data from high-resource languages, yielding the first Thai spoken language understanding dataset of over 1,000 hours. Multiple experiments demonstrate the effectiveness of our methods in building a Thai multitask-understanding SLLM. We open-source XLSR-Thai and Thai-SUP to facilitate future research.

- MeanVC: Lightweight and Streaming Zero-Shot Voice Conversion via Mean Flows

Guobin Ma, Jixun Yao, Ziqian Ning, Yuepeng Jiang, Lingxin Xiong, Lei Xie, and 1 more author

In ICASSP, 2026

Zero-shot voice conversion (VC) aims to transfer timbre from a source speaker to any unseen target speaker while preserving linguistic content. Growing application scenarios demand models with streaming inference capabilities. This has created a pressing need for models that are simultaneously fast, lightweight, and high-fidelity. However, existing streaming methods typically rely on either autoregressive (AR) or non-autoregressive (NAR) frameworks, which either require large parameter sizes to achieve strong performance or struggle to generalize to unseen speakers. In this study, we propose MeanVC, a lightweight and streaming zero-shot VC approach. MeanVC introduces a diffusion transformer with a chunk-wise autoregressive denoising strategy, combining the strengths of both AR and NAR paradigms for efficient streaming processing. By introducing mean flows, MeanVC regresses the average velocity field during training, enabling zero-shot VC with superior speech quality and speaker similarity in a single sampling step by directly mapping from the start to the endpoint of the flow trajectory. Additionally, we incorporate diffusion adversarial post-training to mitigate over-smoothing and further enhance speech quality. Experimental results demonstrate that MeanVC significantly outperforms existing zero-shot streaming VC systems, achieving superior conversion quality with higher efficiency and significantly fewer parameters. Audio demos and code are publicly available at https://aslp-lab.github.io/MeanVC.

- S²Voice: Style-Aware Autoregressive Modeling with Enhanced Conditioning for Singing Style Conversion

Ziqian Wang, Xianjun Xia, Chuanzeng Huang, and Lei Xie

In ICASSP, 2026

We present S²Voice, the winning system of the Singing Voice Conversion Challenge (SVCC) 2025 for both the in-domain and zero-shot singing style conversion tracks. Built on the strong two-stage Vevo baseline, S²Voice advances style control and robustness through several contributions. First, we integrate style embeddings into the autoregressive large language model (AR LLM) via a FiLM-style layer-norm conditioning and a style-aware cross-attention for enhanced fine-grained style modeling. Second, we introduce a global speaker embedding into the flow-matching transformer to improve timbre similarity. Third, we curate a large, high-quality singing corpus via an automated pipeline for web harvesting, vocal separation, and transcript refinement. Finally, we employ a multi-stage training strategy combining supervised fine-tuning (SFT) and direct preference optimization (DPO). Subjective listening tests confirm our system’s superior performance: leading in style similarity and singer similarity for Task 1, and across naturalness, style similarity, and singer similarity for Task 2. Ablation studies demonstrate the effectiveness of our contributions in enhancing style fidelity, timbre preservation, and generalization. Audio samples are available at https://honee-w.github.io/SVC-Challenge-Demo/.

- The ICASSP 2026 Automatic Song Aesthetics Evaluation Challenge

Guobin Ma, Yuxuan Xia, Jixun Yao, Huixin Xue, Hexin Liu, Shuai Wang, and 2 more authors

In ICASSP, 2026

This paper summarizes the ICASSP 2026 Automatic Song Aesthetics Evaluation (ASAE) Challenge, which focuses on predicting the subjective aesthetic scores of AI-generated songs. The challenge consists of two tracks: Track 1 targets the prediction of the overall musicality score, while Track 2 focuses on predicting five fine-grained aesthetic scores. The challenge attracted strong interest from the research community and received numerous submissions from both academia and industry. Top-performing systems significantly surpassed the official baseline, demonstrating substantial progress in aligning objective metrics with human aesthetic preferences. The outcomes establish a standardized benchmark and advance human-aligned evaluation methodologies for modern music generation systems.

- The ICASSP 2026 HumDial Challenge: Benchmarking Human-like Spoken Dialogue Systems in the LLM Era

Zhixian Zhao, Shuiyuan Wang, Guojian Li, Hongfei Xue, Chengyou Wang, Shuai Wang, and 10 more authors

In ICASSP, 2026

Driven by the rapid advancement of Large Language Models (LLMs), particularly Audio-LLMs and Omni-models, spoken dialogue systems have evolved significantly, progressively narrowing the gap between human-machine and human-human interactions. Achieving truly “human-like” communication necessitates a dual capability: emotional intelligence to perceive and resonate with users’ emotional states, and robust interaction mechanisms to navigate the dynamic, natural flow of conversation, such as real-time turn-taking. Therefore, we launched the first Human-like Spoken Dialogue Systems Challenge (HumDial) at ICASSP 2026 to benchmark these dual capabilities. Anchored by a sizable dataset derived from authentic human conversations, this initiative establishes a fair evaluation platform across two tracks: (1) Emotional Intelligence, targeting long-term emotion understanding and empathetic generation; and (2) Full-Duplex Interaction, systematically evaluating real-time decision-making under “listening-while-speaking” conditions. This paper summarizes the dataset, track configurations, and the final results.

- Easy Turn: Integrating Acoustic and Linguistic Modalities for Robust Turn-Taking in Full-Duplex Spoken Dialogue Systems

Guojian Li, Chengyou Wang, Hongfei Xue, Shuiyuan Wang, Dehui Gao, Zihan Zhang, and 5 more authors

In ICASSP, 2026

Full-duplex interaction is crucial for natural human-machine communication, yet remains challenging as it requires robust turn-taking detection to decide when the system should speak, listen, or remain silent. Existing solutions either rely on dedicated turn-taking models or finetune LLM backbones to enable full-duplex capability. Most dedicated models are not open-sourced, and the few available ones are limited by their large parameter size or by supporting only a single modality, such as acoustic or linguistic. Finetuning LLM backbones, in turn, requires large amounts of full-duplex data, which remain scarce in open-source form. To address these issues, we propose Easy Turn, an open-source, modular turn-taking detection model that integrates acoustic and linguistic bimodal information to predict four dialogue turn states: complete, incomplete, backchannel, and wait, accompanied by the release of Easy Turn trainset, a 1,145-hour speech dataset designed for training turn-taking detection models. Compared to existing open-source models like TEN Turn Detection and Smart Turn V2, our model achieves state-of-the-art turn-taking detection accuracy on our open-source Easy Turn testset. The data and model will be made publicly available on GitHub.

- DiffRhythm+: Controllable and Flexible Full-Length Song Generation with Preference Optimization

Huakang Chen, Yuepeng Jiang, Guobin Ma, Chunbo Hao, Shuai Wang, Jixun Yao, and 4 more authors

In ASRU, 2025

Songs, as a central form of musical art, exemplify the richness of human intelligence and creativity. While recent advances in generative modeling have enabled notable progress in long-form song generation, current systems for full-length song synthesis still face major challenges, including data imbalance, insufficient controllability, and inconsistent musical quality. DiffRhythm, a pioneering diffusion-based model, advanced the field by generating full-length songs with expressive vocals and accompaniment. However, its performance was constrained by an unbalanced model training dataset and limited controllability over musical style, resulting in noticeable quality disparities and restricted creative flexibility. To address these limitations, we propose DiffRhythm+, an enhanced diffusion-based framework for controllable and flexible full-length song generation. DiffRhythm+ leverages a substantially expanded and balanced training dataset to mitigate issues such as repetition and omission of lyrics, while also fostering the emergence of richer musical skills and expressiveness. The framework introduces a multi-modal style conditioning strategy, enabling users to precisely specify musical styles through both descriptive text and reference audio, thereby significantly enhancing creative control and diversity. We further introduce direct performance optimization aligned with user preferences, guiding the model toward consistently preferred outputs across evaluation metrics. Extensive experiments demonstrate that DiffRhythm+ achieves significant improvements in naturalness, arrangement complexity, and listener satisfaction over previous systems.

- Drop the beat! Freestyler for Accompaniment Conditioned Rapping Voice Generation

Ziqian Ning, Shuai Wang, Yuepeng Jiang, Jixun Yao, Lei He, Shifeng Pan, and 2 more authors

In AAAI, 2025

Rap, a prominent genre of vocal performance, remains underexplored in vocal generation. General vocal synthesis depends on precise note and duration inputs, requiring users to have related musical knowledge, which limits flexibility. In contrast, rap typically features simpler melodies, with a core focus on a strong rhythmic sense that harmonizes with accompanying beats. In this paper, we propose Freestyler, the first system that generates rapping vocals directly from lyrics and accompaniment inputs. Freestyler utilizes language model-based token generation, followed by a conditional flow matching model to produce spectrograms and a neural vocoder to restore audio. It allows a 3-second prompt to enable zero-shot timbre control. Due to the scarcity of publicly available rap datasets, we also present RapBank, a rap song dataset collected from the internet, alongside a meticulously designed processing pipeline. Experimental results show that Freestyler produces high-quality rapping voice generation with enhanced naturalness and strong alignment with accompanying beats, both stylistically and rhythmically.

- StableVC: Style Controllable Zero-Shot Voice Conversion with Conditional Flow Matching

Jixun Yao, Yang Yuguang, Yu Pan, Ziqian Ning, Jianhao Ye, Hongbin Zhou, and 1 more author

In AAAI, 2025

Zero-shot voice conversion (VC) aims to transfer the timbre from the source speaker to an arbitrary unseen speaker while preserving the original linguistic content. Despite recent advancements in zero-shot VC using language model-based or diffusion-based approaches, several challenges remain: 1) current approaches primarily focus on adapting timbre from unseen speakers and are unable to transfer style and timbre to different unseen speakers independently; 2) these approaches often suffer from slower inference speeds due to the autoregressive modeling methods or the need for numerous sampling steps; 3) the quality and similarity of the converted samples are still not fully satisfactory. To address these challenges, we propose a style controllable zero-shot VC approach named StableVC, which aims to transfer timbre and style from source speech to different unseen target speakers. Specifically, we decompose speech into linguistic content, timbre, and style, and then employ a conditional flow matching module to reconstruct the high-quality mel-spectrogram based on these decomposed features. To effectively capture timbre and style in a zero-shot manner, we introduce a novel dual attention mechanism with an adaptive gate, rather than using conventional feature concatenation. With this non-autoregressive design, StableVC can efficiently capture the intricate timbre and style from different unseen speakers and generate high-quality speech significantly faster than real-time. Experiments demonstrate that our proposed StableVC outperforms state-of-the-art baseline systems in zero-shot VC and achieves flexible control over timbre and style from different unseen speakers. Moreover, StableVC offers approximately 25x and 1.65x faster sampling compared to autoregressive and diffusion-based baselines.

- ZSVC: Zero-shot Style Voice Conversion with Disentangled Latent Diffusion Models and Adversarial Training

Xinfa Zhu, Lei He, Yujia Xiao, Xi Wang, Xu Tan, Sheng Zhao, and 1 more author

In ICASSP, 2025

Style voice conversion aims to transform the speaking style of source speech into a desired style while keeping the original speaker’s identity. However, previous style voice conversion approaches primarily focus on well-defined domains such as emotional aspects, limiting their practical applications. In this study, we present ZSVC, a novel Zero-shot Style Voice Conversion approach that utilizes a speech codec and a latent diffusion model with speech prompting mechanism to facilitate in-context learning for speaking style conversion. To disentangle speaking style and speaker timbre, we introduce information bottleneck to filter speaking style in the source speech and employ Uncertainty Modeling Adaptive Instance Normalization (UMAdaIN) to perturb the speaker timbre in the style prompt. Moreover, we propose a novel adversarial training strategy to enhance in-context learning and improve style similarity. Experiments conducted on 44,000 hours of speech data demonstrate the superior performance of ZSVC in generating speech with diverse speaking styles in zero-shot scenarios.

- CAMEL: Cross-Attention Enhanced Mixture-of-Experts and Language Bias for Code-Switching Speech Recognition

He Wang, Xucheng Wan, Naijun Zheng, Kai Liu, Huan Zhou, Guojian Li, and 1 more author

In ICASSP, 2025

Code-switching automatic speech recognition (ASR) aims to transcribe speech that contains two or more languages accurately. To better capture language-specific speech representations and address language confusion in code-switching ASR, the mixture-of-experts (MoE) architecture and an additional language diarization (LD) decoder are commonly employed. However, most research remains limited to simple operations like weighted summation or concatenation to fuse language-specific speech representations, leaving significant opportunities to explore the enhancement of integrating language bias information. In this paper, we introduce CAMEL, a cross-attention-based MoE and language bias approach for code-switching ASR. Specifically, after each MoE layer, we fuse language-specific speech representations with cross-attention, leveraging its strong contextual modeling abilities. Additionally, we design a source attention-based mechanism to incorporate the language information from the LD decoder output into text embeddings. Experimental results demonstrate that our approach achieves state-of-the-art performance on the SEAME, ASRU200, and ASRU700+LibriSpeech460 Mandarin-English code-switching ASR datasets.

- HDMoLE: Mixture of LoRA Experts with Hierarchical Routing and Dynamic Thresholds for Fine-Tuning LLM-based ASR Models

Bingshen Mu, Kun Wei, Qijie Shao, Yong Xu, and Lei Xie

In ICASSP, 2025

Recent advancements in integrating Large Language Models (LLM) with automatic speech recognition (ASR) have performed remarkably in general domains. While supervised fine-tuning (SFT) of all model parameters is often employed to adapt pre-trained LLM-based ASR models to specific domains, it imposes high computational costs and notably reduces their performance in general domains. In this paper, we propose a novel parameter-efficient multi-domain fine-tuning method for adapting pre-trained LLM-based ASR models to multi-accent domains without catastrophic forgetting named HDMoLE, which leverages hierarchical routing and dynamic thresholds based on combining low-rank adaptation (LoRA) with the mixture of experts (MoE) and can be generalized to any linear layer. Hierarchical routing establishes a clear correspondence between LoRA experts and accent domains, improving cross-domain collaboration among the LoRA experts. Unlike the static Top-K strategy for activating LoRA experts, dynamic thresholds can adaptively activate varying numbers of LoRA experts at each MoE layer. Experiments on the multi-accent and standard Mandarin datasets demonstrate the efficacy of HDMoLE. Applying HDMoLE to an LLM-based ASR model projector module achieves similar performance to full fine-tuning in the target multi-accent domains while using only 9.6% of the trainable parameters required for full fine-tuning and minimal degradation in the source general domain.

- DiffAttack: Diffusion-based Timbre-reserved Adversarial Attack in Speaker Identification

Qing Wang, Jixun Yao, Zhaokai Sun, Pengcheng Guo, Lei Xie, and John H.L. Hansen

In ICASSP, 2025

Being a form of biometric identification, the security of the speaker identification (SID) system is of utmost importance. To better understand the robustness of SID systems, we aim to perform more realistic attacks in SID, which are challenging for both humans and machines to detect. In this study, we propose DiffAttack, a novel timbre-reserved adversarial attack approach that exploits the capability of a diffusion-based voice conversion (DiffVC) model to generate adversarial fake audio with distinct target speaker attribution. By introducing adversarial constraints into the generative process of the diffusion-based voice conversion model, we craft fake samples that effectively mislead target models while preserving speaker-wise characteristics. Specifically, inspired by the use of randomly sampled Gaussian noise in conventional adversarial attacks and diffusion processes, we incorporate adversarial constraints into the reverse diffusion process. These constraints subtly guide the reverse diffusion process toward aligning with the target speaker distribution. Our experiments on the LibriTTS dataset indicate that DiffAttack significantly improves the attack success rate compared to vanilla DiffVC and other methods. Moreover, objective and subjective evaluations demonstrate that introducing adversarial constraints does not compromise the speech quality generated by the DiffVC model.

- GenSE: Generative Speech Enhancement via Language Models using Hierarchical Modeling

Jixun Yao, Hexin Liu, Chen Chen, Yuchen Hu, Eng Siong Chng, and Lei Xie

In ICLR, 2025

Semantic information refers to the meaning conveyed through words, phrases, and contextual relationships within a given linguistic structure. Humans can leverage semantic information, such as familiar linguistic patterns and contextual cues, to reconstruct incomplete or masked speech signals in noisy environments. However, existing speech enhancement (SE) approaches often overlook the rich semantic information embedded in speech, which is crucial for improving intelligibility, speaker consistency, and overall quality of enhanced speech signals. To enrich the SE model with semantic information, we employ language models as an efficient semantic learner and propose a comprehensive framework tailored for language model-based speech enhancement, called GenSE. Specifically, we approach SE as a conditional language modeling task rather than a continuous signal regression problem defined in existing works. This is achieved by tokenizing speech signals into semantic tokens using a pre-trained self-supervised model and into acoustic tokens using a custom-designed single-quantizer neural codec model. To improve the stability of language model predictions, we propose a hierarchical modeling method that decouples the generation of clean semantic tokens and clean acoustic tokens into two distinct stages. Moreover, we introduce a token chain prompting mechanism during the acoustic token generation stage to ensure timbre consistency throughout the speech enhancement process. Experimental results on benchmark datasets demonstrate that our proposed approach outperforms state-of-the-art SE systems in terms of speech quality and generalization capability.

- EASY: Emotion-aware Speaker Anonymization via Factorized Distillation

Jixun Yao, Hexin Liu, Eng Siong Chng, and Lei Xie

In Interspeech, 2025

Emotion plays a significant role in speech interaction, conveyed through tone, pitch, and rhythm, enabling the expression of feelings and intentions beyond words to create a more personalized experience. However, most existing speaker anonymization systems employ parallel disentanglement methods, which only separate speech into linguistic content and speaker identity, often neglecting the preservation of the original emotional state. In this study, we introduce EASY, an emotion-aware speaker anonymization framework. EASY employs a novel sequential disentanglement process to disentangle speaker identity, linguistic content, and emotional representation, modeling each speech attribute in distinct subspaces through a factorized distillation approach. By independently constraining speaker identity and emotional representation, EASY minimizes information leakage, enhancing privacy protection while preserving original linguistic content and emotional state. Experimental results on the VoicePrivacy Challenge official datasets demonstrate that our proposed approach outperforms all baseline systems, effectively protecting speaker privacy while maintaining linguistic content and emotional state.

- Leveraging LLM and Self-Supervised Training Models for Speech Recognition in Chinese Dialects: A Comparative Analysis

Tianyi Xu, Hongjie Chen, Wang Qing, Lv Hang, Jian Kang, Li Jie, and 3 more authors

In Interspeech, 2025

Large-scale training corpora have significantly improved the performance of ASR models. Unfortunately, due to the relative scarcity of data, Chinese accents and dialects remain a challenge for most ASR models. Recent advancements in self-supervised learning have shown that self-supervised pre-training, combined with large language models (LLM), can effectively enhance ASR performance in low-resource scenarios. We aim to investigate the effectiveness of this paradigm for Chinese dialects. Specifically, we pre-train a Data2vec2 model on 300,000 hours of unlabeled dialect and accented speech data and perform alignment training on a supervised dataset of 40,000 hours. Then, we systematically examine the impact of various projectors and LLMs on Mandarin, dialect, and accented speech recognition performance under this paradigm. Our method achieved SOTA results on multiple dialect datasets, including KeSpeech. We will open-source our work to promote reproducible research.

-

Selective Invocation for Multilingual ASR: A Cost-effective Approach Adapting to Speech Recognition Difficulty

Hongfei Xue, Yufeng Tang, Jun Zhang, Xuelong Geng, and Lei Xie

In Interspeech, 2025

Although multilingual automatic speech recognition (ASR) systems have significantly advanced, enabling a single model to handle multiple languages, inherent linguistic differences and data imbalances challenge SOTA performance across all languages. While language identification (LID) models can route speech to the appropriate ASR model, they incur high costs from invoking SOTA commercial models and suffer from inaccuracies due to misclassification. To overcome these limitations, we propose SIMA, a selective invocation approach for multilingual ASR that adapts to the difficulty level of the input speech. Built on a spoken large language model (SLLM), SIMA evaluates whether the input is simple enough for direct transcription or requires the invocation of a SOTA ASR model. Our approach reduces word error rates by 18.7% compared to the SLLM and halves invocation costs compared to LID-based methods. Tests on three datasets show that SIMA is a scalable, cost-effective solution for multilingual ASR applications.

-

AISHELL-5: The First Open-Source In-Car Multi-Channel Multi-Speaker Speech Dataset for Automatic Speech Diarization and Recognition

Yuhang Dai, He Wang, Xingchen Li, Zihan Zhang, Shuiyuan Wang, Lei Xie, and 5 more authors

In Interspeech, 2025

This paper delineates AISHELL-5, the first open-source in-car multi-channel multi-speaker Mandarin automatic speech recognition (ASR) dataset. AISHELL-5 includes two parts: (1) over 100 hours of multi-channel speech data recorded in an electric vehicle across more than 60 real driving scenarios. This audio data consists of four far-field speech signals captured by microphones located on each car door, as well as near-field signals obtained from high-fidelity headset microphones worn by each speaker. (2) a collection of 40 hours of real-world environmental noise recordings, which supports the in-car speech data simulation. Moreover, we also provide an open-access, reproducible baseline system based on this dataset. This system features a speech frontend model that employs speech source separation to extract each speaker’s clean speech from the far-field signals, along with a speech recognition module that accurately transcribes the content of each individual speaker. Experimental results demonstrate the challenges faced by various mainstream ASR models when evaluated on AISHELL-5. We firmly believe the AISHELL-5 dataset will significantly advance the research on ASR systems under complex driving scenarios by establishing the first publicly available in-car ASR benchmark.

Professional Services

Awards

- 1st Place, ICASSP 2026 Automatic Song Aesthetics Evaluation Challenge

- 3rd Place, Single Track, Interspeech 2026 Audio Reasoning Challenge

- 1st Place, In-Domain Singing Style Conversion Track, ASRU 2025 The Singing Voice Conversion Challenge

- 1st Place, Zero-Shot Singing Style Conversion Track, ASRU 2025 The Singing Voice Conversion Challenge

- 1st Place, General Audio Source Separation Track, NCMMSC 2025 CCF Advanced Audio Technology Competition

- 2nd Place, Target Speaker Lipreading Track, ICME 2024 Chat-scenario Chinese Lipreading (ChatCLR) Challenge

- 1st Place, Source Speaker Verification Against Voice Conversion Track, SLT 2024 Source Speaker Tracing Challenge (SSTC)

- 1st Place, ICASSP 2024 Packet Loss Concealment (PLC) Challenge

- 2nd Place, Real-time Track, ICASSP 2024 Speech Signal Improvement Challenge

- 3rd Place, Non-real-time Track, ICASSP 2024 Speech Signal Improvement Challenge

- 2nd Place, ICASSP 2024 Multimodal Information based Speech Processing (MISP) Challenge

- 1st Place, 2024 Shenghua Cup Acoustic Technology Competition

- 1st Place, Single-Speaker VSR Track, NCMMSC 2024 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, Multi-Speaker VSR Track, NCMMSC 2024 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, SLT 2024 Low-Resource Dysarthria Wake-Up Word Spotting Challenge (LRDWWS Challenge)

- 1st Place, Speech-to-Speech Translation (Offline) Track, ACL 2023 Speech-to-Speech Translation (S2ST)

- 1st Place, Any-to-one, In-domain Singing Voice Conversion Track, ASRU 2023 The Singing Voice Conversion Challenge

- 2nd Place, Any-to-one, Cross-domain Singing Voice Conversion Track, ASRU 2023 The Singing Voice Conversion Challenge

- 2nd Place, Audio-Visual Target Speaker Extraction (AVTSE) Track, ICASSP 2023 Multi-modal Information based Speech Processing (MISP) Challenge

- 1st Place, UDASE (Unsupervised Domain Adaptation for Speech Enhancement) Track, Interspeech 2023 CHiME Speech Separation and Recognition Challenge (CHiME-7)

- 1st Place, Non-personalized AEC Track, ICASSP 2023 Acoustic Echo Cancellation Challenge (AEC Challenge)

- 2nd Place, Personalized AEC Track, ICASSP 2023 Acoustic Echo Cancellation Challenge (AEC Challenge)

- 2nd Place, Audio-Visual Diarization & Recognition Track, ICASSP 2023 Multimodal Information based Speech Processing (MISP) Challenge

- 3rd Place, Audio-Visual Speaker Diarization Track, ICASSP 2023 Multimodal Information based Speech Processing (MISP) Challenge

- 1st Place, Headset Speech Enhancement Track, ICASSP 2023 Deep Noise Suppression Challenge

- 1st Place, Speakerphone Speech Enhancement Track, ICASSP 2023 Deep Noise Suppression Challenge

- 1st Place, Speech Enhancement Track, 2023 Shenghua Cup Acoustic Technology Competition

- 1st Place, ASRU 2023 MultiLingual Speech processing Universal PERformance Benchmark (SUPERB)

- 1st Place, Single-Speaker VSR Track, NCMMSC 2023 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, Multi-Speaker VSR Track, NCMMSC 2023 Chinese Continuous Visual Speech Recognition Challenge (CNVSRC)

- 1st Place, Speaker Anonymization Track, Interspeech 2022 VoicePrivacy 2022 Challenge (VPC 2022)

- 2nd Place, Fully-supervised Track, Interspeech 2022 Far-field Speaker Verification Challenge (FFSVC)

- 2nd Place, Semi-supervised Track, Interspeech 2022 Far-field Speaker Verification Challenge (FFSVC)

- 2nd Place, ISCSLP 2022 Magichub Code-Switching ASR Challenge

- 3rd Place, ISCSLP 2022 Conversational Short-phrase Speaker Diarization Challenge

- 1st Place, Constrained Track, O-COCOSDA 2022 Indic Multilingual Speaker Verification Challenge (I-MSV)

- 3rd Place, Unconstrained Track, O-COCOSDA 2022 Indic Multilingual Speaker Verification Challenge (I-MSV)

- 3rd Place, NCMMSC 2022 Low-resource Mongolian Text-to-Speech Challenge

- 2nd Place, Training with VoxCeleb 1/2 Only Track, VoxSRC 2021 Workshop 2021 VoxCeleb Speaker Recognition Challenge (VoxSRC)

- 2nd Place, Additional Public Data Allowed (e.g., MUSAN, RIR) Track, VoxSRC 2021 Workshop 2021 VoxCeleb Speaker Recognition Challenge (VoxSRC)

- 3rd Place, Real-Time Wideband Speech Enhancement Track, Interspeech 2021 Deep Noise Suppression Challenge (DNS Challenge)

- 3rd Place, Real-Time AEC & Speech Enhancement Track, Interspeech 2021 Acoustic Echo Cancellation Challenge (AEC Challenge)

- 1st Place, Close-talking Single-channel Track, ISCSLP 2021 Personalized Voice Trigger Challenge (PVTC)

- 1st Place, Real-Time Wideband Speech Enhancement Track, Interspeech 2020 Deep Noise Suppression Challenge (DNS Challenge)

- 2nd Place, Non-Real-Time Wideband Speech Enhancement Track, Interspeech 2020 Deep Noise Suppression Challenge (DNS Challenge)

- 1st Place, Closed-set Word-level Audio-Visual Speech Recognition Track, ICMI 2019 Mandarin Audio-Visual Speech Recognition Challenge

- 3rd Place, Interspeech 2018 CHiME Speech Separation and Recognition Challenge (CHiME-5)

- 2nd Place, Unsupervised Subword Unit Modeling Track, Interspeech 2017 Zero Resource Speech Challenge

- 1st Place, Spoken Term Discovery Track, Interspeech 2015 Zero Resource Speech Challenge

- 1st Place, QUESST (Query-by-Example Speech Search) Track, MediaEval Multimedia Benchmark Workshop 2015 Query-by-Example Search on Speech Task (QUESST)

- 2nd Place, QUESST (Query-by-Example Speech Search) Track, MediaEval Multimedia Benchmark Workshop 2014 Query-by-Example Search on Speech Task (QUESST)